Pay-per-Use Compute Jobs: The Practical Alternative to GPU Renting

GPUs are some of the most expensive infrastructure in modern computing. Yet in many AI workflows, they spend most of their time doing nothing.

Training runs finish. Evaluation pipelines pause. Engineers review results. Models get adjusted. Hours, or sometimes days, pass before the next workload begins.

Meanwhile, the GPU remains provisioned and the billing meter continues running. This mismatch between how AI workloads behave and how infrastructure is priced is where a significant portion of AI infrastructure cost quietly accumulates.

Pay per use compute is designed to eliminate that mismatch. Instead of paying for machines that remain allocated around the clock, you pay only for the time during which actual computation occurs. This model is increasingly discussed as pay per use compute jobs, pay per use GPU compute, or pay as you go compute in modern AI infrastructure pricing.

For many teams, that difference can dramatically change the economics of running AI workloads and directly impact overall GPU compute pricing.

What Pay Per Use Compute Actually Means

In a pay per use model, billing begins only when a job starts executing and stops the moment it finishes.

There is no idle machine sitting online, no reserved GPU accumulating hourly charges, and no long term capacity commitment. You pay only for runtime.

Traditional GPU rental works differently. When a GPU instance is provisioned on a cloud platform, billing begins immediately and continues until the machine is manually shut down. Whether the GPU is fully utilized or waiting for the next task, the clock keeps running.

This pricing structure is why many teams begin comparing GPU rental pricing, GPU compute cost per hour, and new GPU alternative models built around pay per use compute jobs.

A useful analogy is infrastructure vs utilities. Traditional GPU rental is similar to leasing an apartment. Once the lease begins, you pay for the space whether you are inside it or not. Pay per use compute behaves more like electricity, where the meter runs only when energy is being consumed. For AI workloads that operate in bursts rather than continuously, that distinction becomes economically significant.

For many teams evaluating rent GPU vs buy GPU decisions, this model introduces a third option: running workloads only when they actually need compute capacity..

What a Compute Job Actually Is

At its simplest, compute means to calculate. Within Ocean Network, those calculations are packaged into compute jobs.

A compute job is a reproducible execution unit containing:

- Application code

- Dependencies

- Model weights

- Runtime configuration

- Hardware requirements

This package is typically deployed as a container and dispatched to a remote execution node. Once scheduled, the job runs in isolation on the requested hardware tier, consuming GPU cycles, CPU resources, memory, and temporary storage during execution.

When the workload finishes, outputs are produced, results are returned to the developer, and the execution environment is terminated. The infrastructure exists only for the duration of the task. No idle machines left running afterward. This is the core principle behind pay per use compute jobs and modern pay as you go compute infrastructure.

What You Actually Pay For

In a pay per use GPU model, cost is driven primarily by two variables: runtime duration and hardware class.

For example, a modern accelerator such as an NVIDIA H100 might rent for roughly $3 to $4 per hour on major cloud marketplaces. This range is often referenced when comparing H100 pricing or estimating GPU compute cost per hour across cloud providers.

A batch workload that runs for three hours might therefore cost $6 to $12, depending on pricing conditions.

Higher memory accelerators like the NVIDIA H200 typically command higher rates, which is why H200 price comparisons frequently appear in discussions about next generation GPU infrastructure. GPUs such as the NVIDIA A100 usually sit at a lower pricing tier within typical GPU rental markets.

Memory and temporary storage are generally bundled into the hardware tier, meaning that for most machine learning jobs, GPU runtime dominates total cost. The key property remains simple: when execution stops, billing stops.

The Variable That Actually Determines Economics: Utilization

The real infrastructure debate is not simply rent vs buy GPU. It is utilization.

Many organizations evaluating rent GPU vs buy GPU decisions focus on purchase price, but the more important factor is how often the hardware is actually used.

Consider a rented H100 instance priced at $3 per hour. Running continuously for a month costs roughly $2,160. Now imagine your workloads actually run four hours per day. That means the GPU is productive only about 16% of the time. When total spending is divided by productive runtime, the effective GPU compute cost per hour rises dramatically. Most of the expense represents idle capacity.

Example Utilization Scenarios

At low utilization levels, a large portion of GPU spending is simply paying for infrastructure that is not actively performing work.

Owning hardware does not eliminate this problem. It simply shifts the cost from operational expenditure to capital expenditure. A single H100 accelerator can cost tens of thousands of dollars upfront, which is why many teams debating rent vs buy GPU economics also evaluate long term GPU compute pricing scenarios before purchasing hardware. Underutilized hardware becomes dormant capital. Pay per use compute removes that risk by aligning cost directly with execution.

Inference Economics in Context

Much discussion around the cost of running inference focuses on API token pricing, but underneath those APIs lies GPU time.

A sufficiently optimized modern GPU can generate large volumes of tokens within minutes when properly batched. When utilization during execution is high, raw GPU cost per million tokens can be surprisingly low.

What inflates inference cost is not silicon capability but idle capacity. When a GPU remains online waiting for requests, calendar based billing distorts per token economics. Pay per use GPU compute, especially for batch inference or embedding generation, keeps pricing proportional to throughput instead of uptime.

For teams running nightly pipelines, evaluation jobs, or periodic retraining, this alignment can materially reduce operational spend.

When Renting or Owning Still Makes Sense

Persistent GPU infrastructure is not irrational. It becomes logical when workloads are continuous, latency requirements are strict, and utilization approaches saturation.

Real time APIs serving thousands of concurrent requests may justify dedicated machines because queuing delays are unacceptable.

However, many internal AI workloads do not have those constraints. Data preprocessing, synthetic data generation, model evaluation, large embedding refreshes, and scheduled fine tuning runs can tolerate asynchronous execution. In these contexts, paying for continuous availability introduces unnecessary financial friction, which is why many teams now evaluate alternative compute platforms or decentralized compute systems.

The correct choice depends on workload shape, not hype.

How Ocean Implements This Model

Ocean Protocol operationalizes pay per use compute jobs through Ocean Network: a geographically distributed compute network.

Instead of relying on centralized data centers, the network aggregates compute resources contributed by independent operators across the world. These resources include GPUs, CPUs, and storage capacity that might otherwise remain idle.

Each machine participating in the network runs an Ocean Node, which provides the environment responsible for executing containerized workloads. Ocean Nodes form a distributed pool of compute resources capable of running jobs submitted by developers across the network.

This architecture transforms underutilized global hardware into usable distributed compute capacity and functions as an emerging alternative to cloud GPU infrastructure.

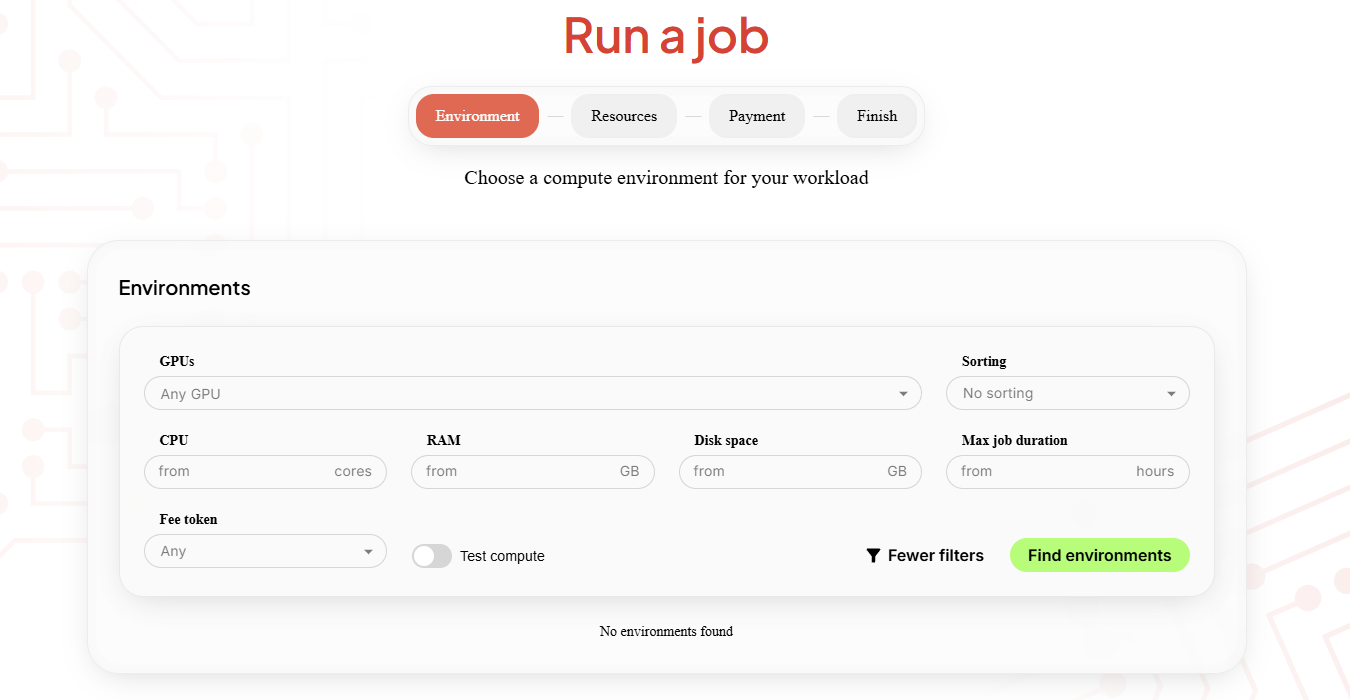

Coordinating a decentralized compute network requires an intelligent coordination layer. Which the Ocean Network has by combining the Dashboard and the Ocean Orchestrator. Let’s unpack:

- Dashboard: here the user can discover the available nodes, across the network. Then, choose the exact specs he needs for his jobs ( GPU/CPU, RAM, Disk Space, time duration etc.). What happens next is that the dashboard will display available hardware, which the user selects. Finally, once matched, the job is dispatched to the selected node, where it runs inside an isolated containerized environment.

- Ocean Orchestrator: functions as the coordination engine between developers and available compute resources.

Its responsibilities include:

- monitoring runtime status

- coordinating job lifecycle events

This orchestration layer allows the Ocean Network to behave like a unified compute platform, even though the underlying infrastructure is geographically distributed.

How to Evaluate It Rationally

The best way to evaluate pay per use compute is to test it with a real workload instead of relying only on estimates. Choose a measurable task such as a batch training job or a full embedding run, and record how long it runs, what GPU tier you used, and the total cost.

Then compare that number against what you would pay to keep a rented GPU online for the same period. The difference between those two totals is informative. A large gap suggests your workload runs in bursts and may benefit significantly from pay per use GPU compute. A small gap suggests high utilization, where keeping infrastructure running continuously could make more sense.

The Strategic Takeaway

The infrastructure question is not whether GPUs are expensive. It is whether your utilization pattern justifies continuous allocation.

Pay per use compute jobs convert GPU access from a fixed commitment into a variable operational expense aligned with actual execution. For teams with episodic or batch oriented workloads, this model can function as one of the most cost effective approaches to GPU compute while also acting as a practical alternative to cloud GPU platforms.

It reduces idle waste, improves cost transparency, and lowers capital exposure without sacrificing capability.

Ready to test your workload? You can get started in minutes.

Head to the Ocean Network Dashboard, where you can run test jobs to verify your algorithms and receive complimentary COMPY tokens to get started. Then install the Ocean Orchestrator extension, initialize a project to generate the compute scaffold, select a GPU node, and submit your job directly from your editor. Results stream back automatically once the run completes.

You can pay via your crypto wallet using USDC on Base, or use the fiat on ramp to get started with a credit card.

Follow us on X at @ONcompute to stay updated on upcoming features and announcements.